Cassian: Agentic Differential Security Review for Pull Requests

How Cassian uses 14 specialized agents to review PRs for security regressions, with an experiment in semi-formal reasoning certificates for structured code analysis

Disclaimer: This post is for educational purposes and authorized security testing only. Only use these techniques against systems you own or have explicit permission to assess, and do not violate laws, contracts, or terms of service.

Table of Contents

- Introduction

- The Harness

- What is Cassian?

- Semi-Formal Reasoning

- The Target: LegalCaseFlow

- The Pipeline

- Agent Reasoning in Practice

- Results

- Takeaways

Introduction

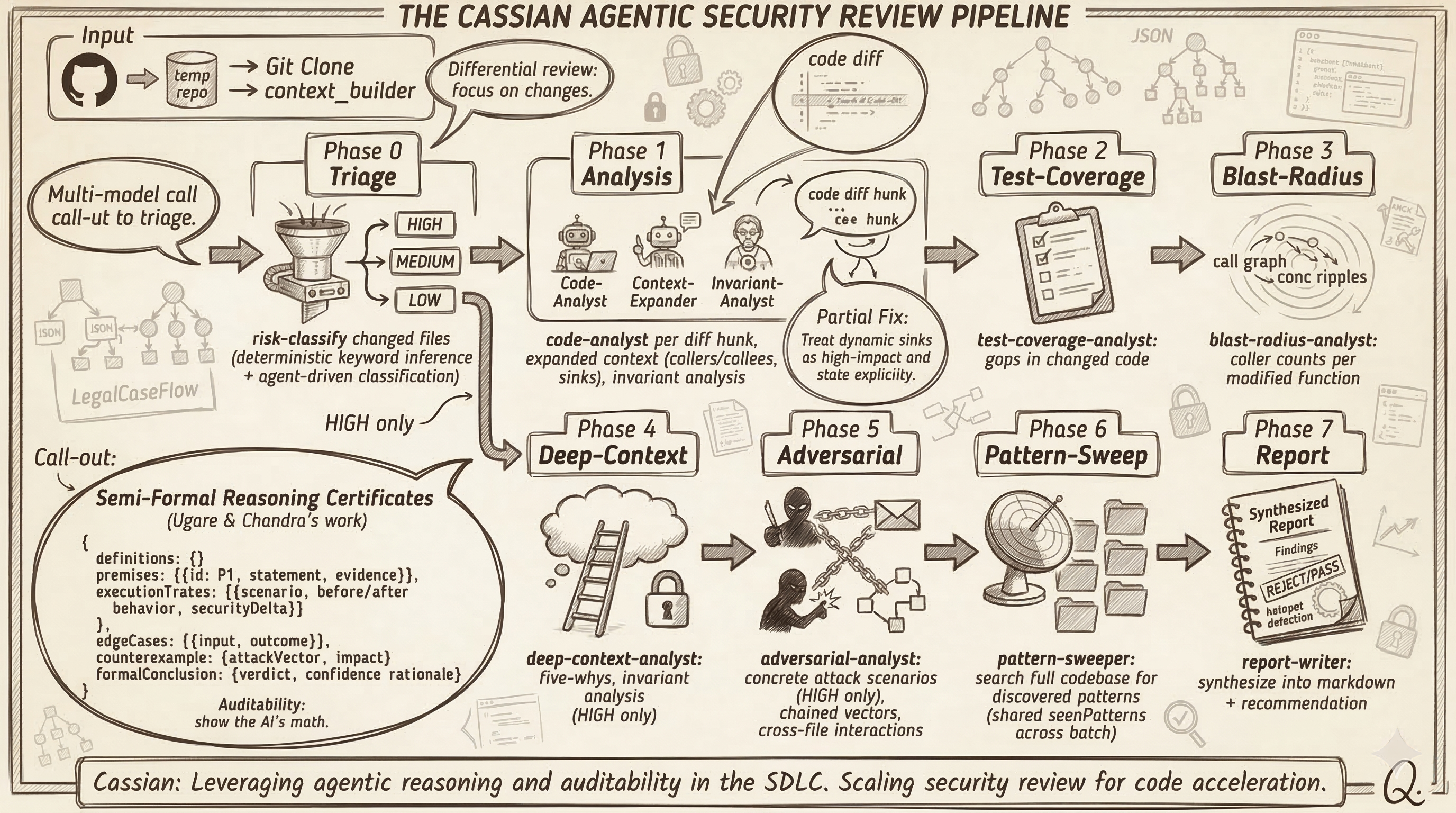

TL;DR — I built Cassian to review remediation PRs after a pentest, using a multi-agent differential review workflow to check whether fixes actually close the issue, surface partial remediations, and prioritize what deserves human attention.

In the ODYSSEUS post, the pipeline ended with a list of confirmed exploits. In a real engagement, that’s where the report gets written and the findings land on someone’s desk. Then what?

Someone has to fix the vulnerabilities. Developers write patches, open pull requests, and those PRs need to be reviewed—not just for correctness, but for whether they actually remediate the issue. Did the fix close the hole, or did it just move the sink one function deeper? Did the developer address the root cause, or just the symptom that the exploit script happened to trigger? And what about the rest of the codebase—if this pattern exists in one handler, does it exist in twenty others?

That’s the gap Cassian fills. It takes a repository and a set of PRs that address security findings and runs a multi-agent differential security review. Not a SAST scan. Not a linter. A structured review that traces execution paths, produces formal security certificates per code change, sweeps the full codebase for discovered patterns, and synthesizes findings into prioritized reports.

The workflow was also directly inspired by Trail of Bits’ differential-review skill, especially the idea of treating changed code, regressions, blast radius, and review depth as first-class parts of the analysis rather than bolting them on after the fact.

The Harness

In the ODYSSEUS post, the “harness” was the FastAPI backend calling Claude Code as a subprocess. It worked, but the orchestration, state management, resilience, and tool registration were all baked into the application code. For the next iteration, I wanted those concerns separated into a reusable framework—something that could host Cassian, or any other agent workflow, without rebuilding the plumbing each time.

The harness is worth its own post, so I’ll keep this brief. The core abstraction:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

export interface Harness {

bus: Bus

agents: typeof Agent

runner: AgentRunner

tools: ToolRegistry

permissions: typeof PermissionNext

approvals: ApprovalManager

sessions: typeof Session

messages: typeof Message

artifacts: typeof Artifact

mailbox: typeof Mailbox

providers: ProviderRegistry

pool: ConcurrencyPool

plugins: PluginManagerImpl

config: ConfigManager

compaction: CompactionService

logger: LogCollector

}

A few things worth calling out.

Agent registration is declarative. You define agents with their model, tools, permissions, step limits, and token budgets, then register them:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

const codex = { providerID: "openai-codex", modelID: process.env.OPENAI_MODEL ?? "gpt-5.2-codex" };

const lockedDownPermissions = PermissionNext.fromConfig({ "*": "deny" });

export const cassianAgents: Record<string, Agent.Info> = {

"context-builder": defineAgent("context-builder", "Build full-repo baseline security context"),

"repo-profiler": defineAgent("repo-profiler", "Profile repository languages, sinks, trust boundaries, and sanitizers"),

"triage-classifier": defineAgent("triage-classifier", "Risk classify changed files"),

"context-expander": defineAgent("context-expander", "Expand changed hunks into call-path and sink-relevant context"),

"code-analyst": defineAgent("code-analyst", "Analyze diff hunks for regressions and vulnerabilities"),

"partial-fix-analyst": defineAgent("partial-fix-analyst", "Detect partial remediation where risky sinks remain exposed"),

"pattern-sweeper": defineAgent("pattern-sweeper", "Sweep codebase for discovered vulnerability patterns"),

"report-writer": defineAgent("report-writer", "Produce structured markdown report"),

"final-report-analyst": defineAgent("final-report-analyst", "Synthesize run-wide analyst priorities"),

};

Multi-model support uses the Vercel AI SDK under the hood. The provider registry wraps @ai-sdk/anthropic, @ai-sdk/openai, @ai-sdk/google, and @ai-sdk/amazon-bedrock with a unified interface. Each provider can have multiple auth profiles with independent failure tracking—if one API key hits a rate limit, the runner rotates to the next active profile.

The resilience engine classifies provider errors (rate_limit, overloaded, timeout, quota, context_overflow) and makes retry decisions with exponential backoff, jitter, and per-profile cooldowns:

1

2

3

4

5

6

7

// Reason-specific retry rules:

// auth → no retry, fail profile

// quota → rotate + disable, exponential backoff

// context_overflow → no retry

// rate_limit → rotate + cooldown, respect Retry-After

// overloaded → conditionally rotate

// server_error → conditionally rotate

This matters when you’re running 14 agents across dozens of PRs. Without resilience at the harness level, a single rate-limit response cascades into a failed pipeline run.

Tool registration uses adapters. You implement a ToolAdapter that returns ToolDefinition objects, and the registry makes them available to agents based on per-agent permission rules:

1

2

3

await harness.tools.registerAdapter(new GitToolAdapter()); // git:clone, git:diff, git:log, git:blame

await harness.tools.registerAdapter(new GhToolAdapter()); // gh:pr-list, gh:pr-view, gh:pr-diff

await harness.tools.registerAdapter(new FilesystemToolAdapter()); // fs:read, fs:grep, fs:glob

Permissions use glob-based pattern matching with last-match-wins semantics. An agent can be given fs:read but denied fs:write. The approval manager adds a lease system on top—once a human approves a tool pattern, that approval is cached for a configurable duration.

The harness also provides a Pipeline abstraction with typed stages, an event bus with 30+ typed events, a plugin system with lifecycle hooks, and stall detection (if an agent produces the same empty output five times, the runner terminates the run). Cassian consumes all of this. It registers its agents and tools, builds a pipeline, and the harness handles execution, concurrency, persistence, and error recovery. The harness is still a work in progress—the pieces described here are functional, but we’re still monitoring performance and effectiveness.

What is Cassian?

Cassian is a differential security review system built on the harness. It defines 14 agents, though only the relevant ones run for a given PR. Given a repository and one or more PRs, it runs across a two-stage pipeline, with a multi-phase review workflow inside each PR analysis, to produce structured security reports.

The key word is differential. Cassian doesn’t scan the entire codebase from scratch—it focuses on what changed in each PR, traces those changes through the codebase to understand their security implications, and sweeps for the same patterns elsewhere. This is closer to how a human security reviewer works: read the diff, understand the context, assess the impact.

1

cassian review --repo https://github.com/org/repo --pr 123 --output ./reports

Or for batch review of everything merged in the last two days:

1

cassian review --repo https://github.com/org/repo --since 2d --output ./reports

The output is a set of per-PR markdown reports, structured JSON findings, and a batch-level final report that identifies hotspot files and priority candidates across all PRs reviewed.

Bootstrap is minimal—Cassian initializes the harness, registers its agents and tools, and builds the pipeline:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

export async function createCassian(config: CassianConfig = {}): Promise<Cassian> {

const defaultModel = config.defaultModel ?? {

providerID: "openai-codex",

modelID: process.env.OPENAI_MODEL ?? "gpt-5.2-codex",

};

const harness = await createHarness({

defaultModel,

providers: [

{

id: "openai-codex",

models: [{ id: defaultModel.modelID, providerID: "openai-codex" }],

auth: [{ id: "codex-oauth", type: "custom", credentials: { apiKey: CODEX_OAUTH_DUMMY_KEY }, status: "active" }],

},

],

approval: { defaultPolicy: "allow", leaseDurations: {}, toolPolicies: {} },

pool: { maxConcurrent: config.pool?.maxConcurrent ?? 3 },

compaction: { enabled: true, threshold: 0.8, preserveRecent: 10, compactionAgentID: "compaction" },

resilience: ResilienceConfigSchema.parse({}),

});

await harness.init();

Agent.clear();

Agent.register(cassianAgents);

await harness.tools.registerAdapter(new GitToolAdapter());

await harness.tools.registerAdapter(new GhToolAdapter());

await harness.tools.registerAdapter(new FilesystemToolAdapter());

}

All 14 agents currently use the same model family—OpenAI Codex with gpt-5.2-codex as the default model ID—and each agent gets a dedicated prompt loaded from a text file that specifies the output JSON schema and reasoning requirements.

Semi-Formal Reasoning

Most agent prompts I’ve seen in security tooling follow a pattern: “You are a security expert. Analyze this code. Find vulnerabilities.” Maybe they add chain-of-thought. The problem is that unstructured reasoning lets the model make unsupported claims—“this input is unsanitized” without actually tracing the data flow, or “this function is safe” without checking edge cases.

Cassian’s prompts are structured around semi-formal reasoning certificates, an approach drawn from Ugare and Chandra’s Agentic Code Reasoning paper (Meta, 2026). The core idea: instead of free-form analysis, force the agent to construct explicit premises, trace execution paths with before/after behavior, check edge cases, search for counterexamples, and derive a formal conclusion.

The code-analyst prompt requires this exact structure for every diff hunk:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

{

"certificate": {

"definitions": {

"securityRegression": "...",

"vulnerabilityIntroduction": "..."

},

"premises": [

{ "id": "P1", "statement": "...", "evidence": "..." }

],

"executionTraces": [

{

"scenario": "...",

"beforeBehavior": "...",

"afterBehavior": "...",

"securityDelta": "SAFER | EQUIVALENT | WEAKER | UNKNOWN"

}

],

"edgeCases": [

{

"input": "...",

"beforeOutcome": "...",

"afterOutcome": "...",

"securityRelevant": true

}

],

"counterexample": {

"exists": false,

"attackVector": "...",

"payload": "...",

"impact": "..."

},

"formalConclusion": {

"verdict": "SAFE | VULNERABLE | UNCERTAIN",

"derivation": "...",

"confidenceRationale": "..."

}

}

}

The structured template acts as an accountability mechanism—every claim must reference a premise, every verdict must cite traced evidence. A human reviewer can verify the reasoning by checking whether each claim is supported, without re-reading the entire codebase.

The tradeoff is cost—semi-formal reasoning uses 2-3x more tokens than standard prompting. For security review where thoroughness matters more than speed, that’s a favorable tradeoff.

The prompt also enforces a link between certificates and findings:

1

2

3

4

5

6

Rules:

- Every finding must include evidenceChain premise IDs.

- If verdict is VULNERABLE, findings must not be empty.

- If verdict is UNCERTAIN and a dangerous sink is touched, emit at

least one LOW/MEDIUM finding that describes uncertainty and review need.

- Treat UNKNOWN deltas on externally reachable paths as security-relevant.

This prevents the agent from producing findings without supporting reasoning, or reasoning that concludes VULNERABLE without actually emitting a finding.

The Target: LegalCaseFlow

For the ODYSSEUS post, the target was OWASP Juice Shop. That was fine for demonstrating the platform, but Claude was almost certainly trained on Juice Shop’s source code, which muddied any conclusions about the agent’s actual analytical capability.

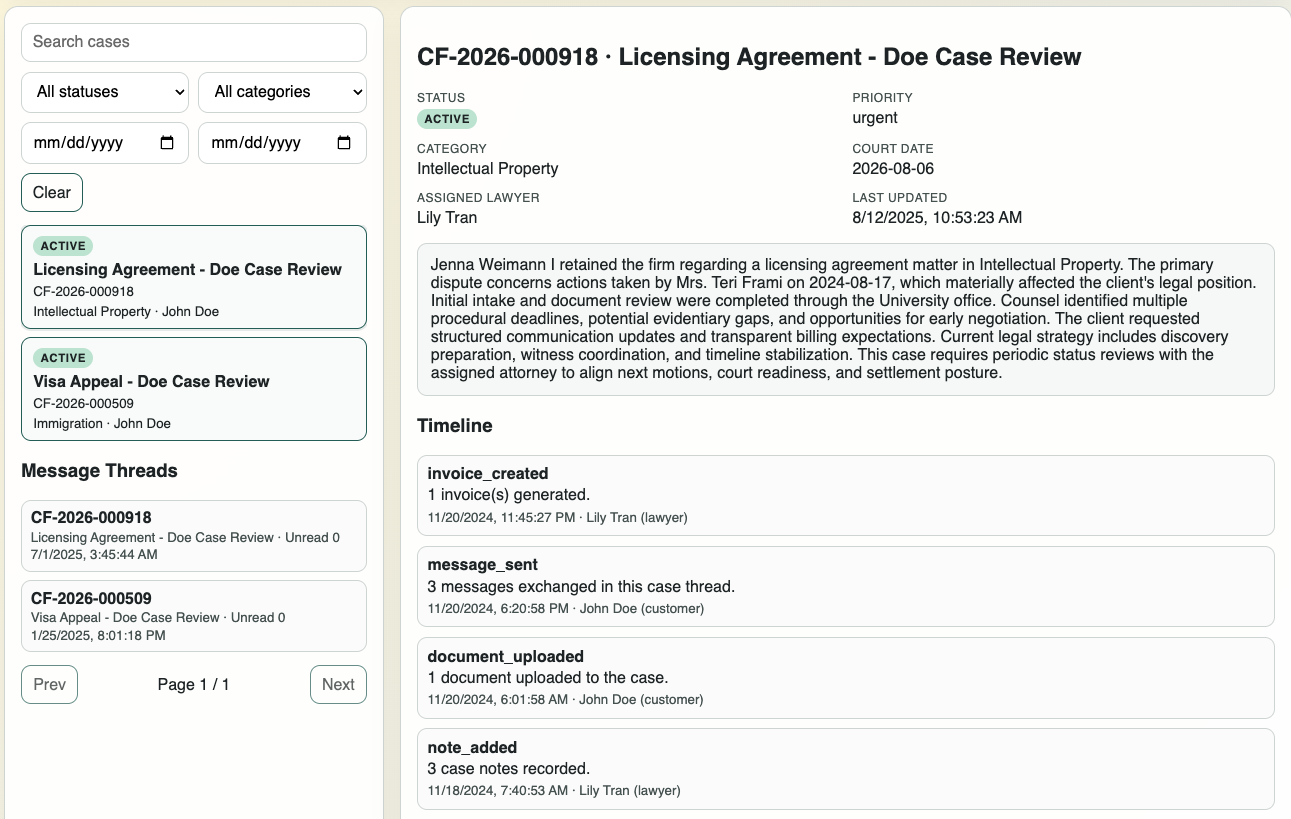

For Cassian, I used a purpose-built vulnerable repository named legalCTF. The application inside that repo is LegalCaseFlow—a deliberately vulnerable legal case management platform built as a custom CTF target instead of reusing something like Juice Shop. It’s a full-stack application with React on the frontend, Express on the backend, PostgreSQL, and Docker Compose for the whole stack. The application implements case lifecycle management, role-based access control (Admin, Lawyer, Paralegal, Client), messaging, billing workflows, and report generation, seeded with 1,000+ realistic test cases.

LegalCaseFlow, the purpose-built

LegalCaseFlow, the purpose-built legalCTF target application used for the Cassian review workflow. LegalCaseFlow was created with LLM assistance for security research and testing. All names, cases, documents, and records shown are synthetic, and any resemblance to real individuals or organizations is coincidental.

The application has a broad attack surface—SQL injection, command injection, XSS, CSRF, authorization bypasses, information disclosure—but for this post, the two vulnerabilities that matter are the ones with corresponding GitHub issues and fix PRs:

Report Variable Override Injection (Issue #1, PR #3) — The report generation endpoint allows user-supplied template variable overrides without filtering prototype pollution vectors (

__proto__,constructor,prototype). PR #3 adds asanitizeReportVariableOverridesfilter.Billing Expression Injection (Issue #2, PR #4) —

calculateBillingEstimateprocesses user-supplied mathematical expressions vianew Function()without input validation, creating a code injection vector. PR #4 addsvalidateBillingExpressionwith length, character, and token checks.

Cassian reviews these fix PRs to assess whether the remediations are complete.

The Pipeline

At the harness Pipeline level, Cassian runs in two stages: a context-building stage and a per-PR review stage. Around that core pipeline, the full run has four operational steps:

1

2

3

4

Step 1: clone / resolve repo → git clone or local path resolution

Step 2: context → context-builder + repo-profiler

Step 3: review PRs → per-PR review in a ConcurrencyPool (max 3 parallel)

Step 4: final report → batch synthesis, hotspot detection, priority candidates

The per-PR review stage is where the detailed work happens. Within that stage, each PR goes through 8 review phases:

Triage and Routing

Before any agent touches a PR, the pipeline classifies changed files by risk. This is partly deterministic—keyword-based inference from file paths—and partly agent-driven via the triage-classifier:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

const HIGH_KEYWORDS = [

"auth", "oauth", "token", "crypto", "cipher", "payment",

"wallet", "transfer", "permission", "rbac", "acl", "middleware",

"api", "controller", "handler", "validation", "sanitize",

"eval", "exec", "template", "render", "merge", "override",

];

export function inferRisk(filePath: string): FileTriage["risk"] {

const path = filePath.toLowerCase();

if (path.endsWith(".md") || path.includes("test")) return "LOW";

if (HIGH_KEYWORDS.some((kw) => path.includes(kw))) return "HIGH";

if (MEDIUM_KEYWORDS.some((kw) => path.includes(kw))) return "MEDIUM";

return "LOW";

}

Routing decisions are deterministic based on triage output:

1

2

3

4

5

6

7

8

9

// Strategy by PR size

if (fileCount < 20) return "DEEP"; // Analyze everything

if (fileCount <= 200) return "FOCUSED"; // Skip LOW risk

return "SURGICAL"; // HIGH + MEDIUM only

// Deep phases (adversarial, deep-context) only for HIGH risk

export function shouldRunDeepPhases(risk: FileTriage["risk"]): boolean {

return risk === "HIGH";

}

For the legalCTF PRs, both were classified as FOCUSED strategy. The export.service.ts file was classified HIGH risk (matches exec, eval, override, sanitize keywords). Test files and configs were classified LOW and skipped.

Diff Hunk Analysis

The pipeline doesn’t analyze files—it analyzes hunks. Each @@ ... @@ block in the diff is extracted with surrounding context and sent independently to the code-analyst. This gives granular findings with precise line attribution and lets the agent reason about the specific behavioral change in each hunk.

The code-analyst receives expanded context from the context-expander (callers, callees, sinks, guards for the changed code) and the repo profile (languages, frameworks, known sanitizers). It produces a semi-formal certificate and findings per hunk.

Pattern Sweep

When the code-analyst discovers a vulnerability pattern in a hunk, the pattern-sweeper searches the entire codebase for the same pattern:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

export async function sweepPatterns(

findings: Finding[],

seenPatterns: Set<string>,

search: PatternSearchFn,

): Promise<PatternSweepResult> {

const discovered: Finding[] = [];

for (const finding of findings) {

if (!finding.pattern) continue;

const pattern = finding.pattern;

if (seenPatterns.has(pattern.id)) {

patternsSkipped++; // Already swept by a prior PR in this batch

continue;

}

seenPatterns.add(pattern.id);

patternsSwept++;

const matches = await search(pattern);

for (const match of matches) {

const derived: Finding = {

...finding,

title: `Pattern recurrence: ${pattern.description}`,

file: match.file,

lineNumber: match.lineNumber,

description: match.description,

source: "pattern-sweep",

parentFindingId: finding.id,

};

// Deduplicate by (file, lineNumber, pattern.id)

discovered.push(derived);

}

}

}

The seenPatterns set is shared across all PRs in a batch run. If PR #3 discovers and sweeps a pattern, PR #4 won’t re-sweep it. Findings are deduplicated by (file, lineNumber, pattern.id) to prevent the same issue from appearing N times.

Cassian also runs a set of baseline patterns regardless of what the code-analyst finds—hardcoded searches for eval(, new Function(, child_process.exec, __proto__, Object.assign(, and other common sinks. This ensures broad coverage even when the per-hunk analysis doesn’t flag a pattern.

Partial Fix Detection

The partial-fix-analyst is one of the more interesting agents. It looks specifically for cases where a guard was added or changed, but the underlying dangerous sink still exists:

1

2

3

4

5

6

7

8

Goal:

- Detect likely partial remediations where a dangerous sink remains reachable.

Requirements:

- Focus on cases where validation/guard changed but sink still exists.

- Treat dynamic execution sinks (eval/new Function/exec/spawn) as

high-impact and state that explicitly in reason.

- Include only plausible security-relevant suspicions.

For the legalCTF billing fix (PR #4), the partial-fix-analyst flagged exactly this: the validateBillingExpression function adds regex-based validation before the new Function() call, but the new Function() sink remains in the code. The validation might be bypassable, so the remediation is potentially incomplete.

The Adversarial Phase

For HIGH-risk files only, the adversarial-analyst models concrete attack scenarios that the per-hunk analysis couldn’t see—cross-file interactions, chained vulnerabilities, and trust boundary violations:

1

2

3

4

5

6

7

8

9

Goal:

- Produce concrete attacker-oriented scenarios for HIGH-risk changes.

- You will receive semi-formal security certificates from prior code analysis.

- Focus on attack scenarios NOT already covered by the certificate counterexamples.

Requirements:

- Each scenario should include attacker capability + path + impact.

- Focus on net-new attack vectors: cross-file interactions, chained

vulnerabilities, trust boundary violations.

This layered approach—code-analyst finds per-hunk issues, adversarial-analyst finds cross-cutting issues—mirrors how a human review team works. The analyst reads the code; the adversarial thinker asks “what did the analyst miss?”

Agent Reasoning in Practice

Here’s what the semi-formal certificates look like on real code. From PR #3’s review of the sanitization fix:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

**Premises**

- **P1**: A new sanitization helper excludes keys listed in UNSAFE_OVERRIDE_KEYS.

_(Added function sanitizeReportVariableOverrides iterates entries and

skips keys when UNSAFE_OVERRIDE_KEYS.has(key) is true.)_

- **P2**: The function only filters keys and preserves values for allowed keys.

_(sanitized[key] = value is executed for all non-unsafe keys.)_

**Execution Traces**

- **Caller uses sanitizeReportVariableOverrides before applying overrides.**

before=No explicit helper to filter unsafe override keys in this file segment.

after=Overrides with keys in UNSAFE_OVERRIDE_KEYS are removed. → **SAFER**

**Edge Cases**

- Input: `rawVariables containing only unsafe keys`

before=No filtering helper existed in this scope.

after=Returns empty object. **(security-relevant)**

- Input: `rawVariables with mixed safe and unsafe keys`

before=No filtering helper existed in this scope.

after=Unsafe keys removed; safe keys preserved. **(security-relevant)**

**Formal Conclusion**

- Verdict: **SAFE**

- Derivation: P1–P2 show added filtering of unsafe override keys without

introducing risky behavior; no dangerous sink modified.

- Confidence: Change is additive and sanitizing; absence of caller context

limits proof of effective use but no regression observed.

The certificate concludes SAFE for this specific hunk. But the uncertainty findings section adds nuance:

1

2

3

4

5

## Uncertainty Findings

- Prototype pollution risk may persist through nested override objects

(export.service.ts:169) [MEDIUM] — sanitizeReportVariableOverrides

filters only top-level keys; if applyContextOverrides performs deep merge,

__proto__/constructor/prototype could still be supplied in nested structures.

This is exactly the kind of finding that distinguishes structured reasoning from a SAST scan. The agent concluded the hunk itself is safe, but flagged that the fix might be insufficient because it only sanitizes top-level keys. A deep merge downstream could still be vulnerable to nested prototype pollution. That’s a real finding that requires human review.

PR #4’s billing fix produced a similar pattern—the certificates all concluded SAFE (validation was strictly added, no existing checks weakened), but the risk signals section flagged the core issue:

1

2

3

4

5

6

7

8

9

10

11

12

13

## Risk Signals

- [HIGH] Dynamic expression evaluation still uses Function constructor

(export.service.ts:514) — Billing expressions are evaluated via the

Function constructor, which is a dynamic execution sink. New validation

reduces risk but does not eliminate it if bypassed or incomplete.

## Partial Fix Suspicions

- export.service.ts:514

sink=new Function(...variableNames, `return (${normalizedExpression});`)

guard=Added validateBillingExpression checks (length/allowed chars/

blocked tokens/function and property access) before evaluation.

— High-impact dynamic execution sink (new Function) remains reachable;

validation is regex-based and may be bypassed.

The agent is saying: the fix adds guards, and those guards are directionally correct, but the fundamental architecture—user input reaching new Function()—hasn’t changed. The validation is regex-based, and regex-based validation of expression syntax is historically brittle.

Results

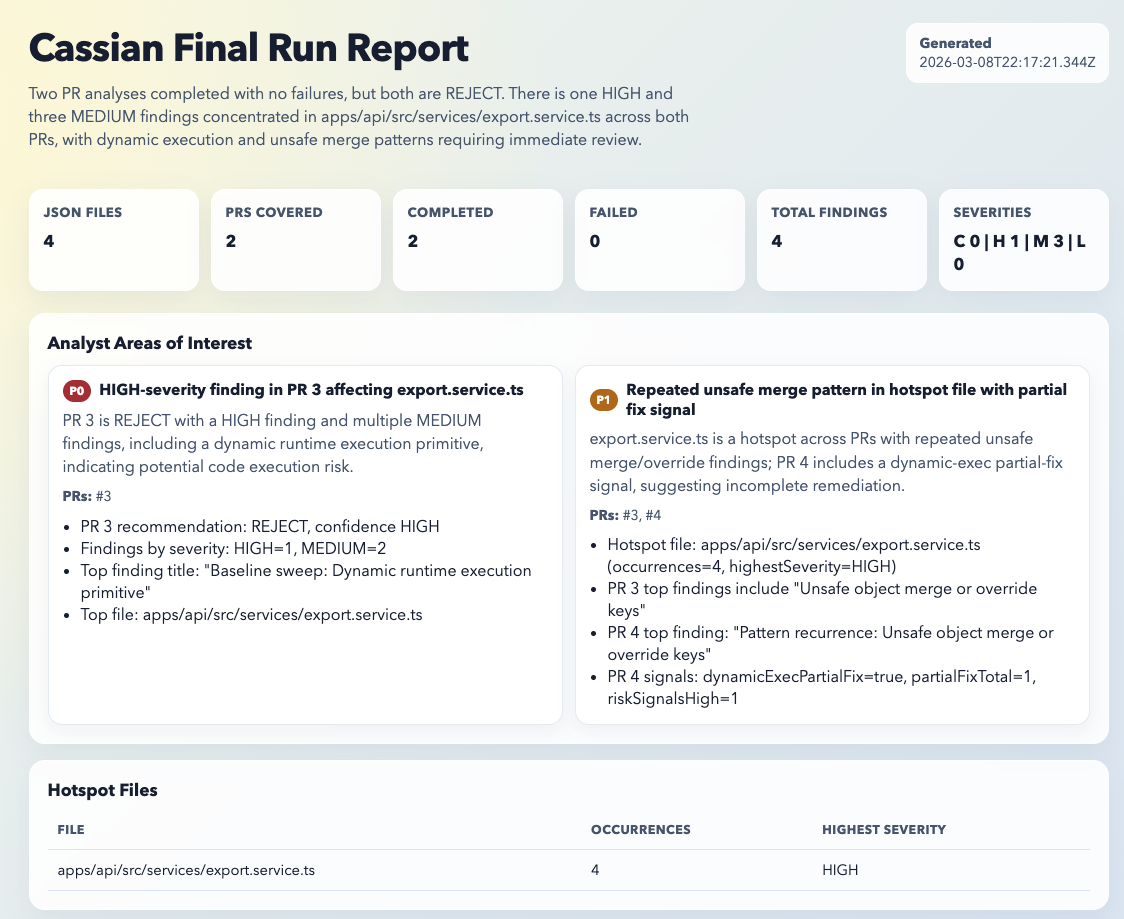

Against the legalCTF repository, the synthesized final report covered 2 PRs and surfaced:

- 4 findings total: 1 HIGH, 3 MEDIUM

- 2 REJECT recommendations (both PRs)

- 1 hotspot file:

apps/api/src/services/export.service.ts - 1 explicit partial-fix suspicion, in PR #4

The final report synthesizes across PRs and identifies analyst areas of interest by priority:

- P0: HIGH-severity dynamic execution finding in PR #3—review immediately before merge

- P1: Repeated unsafe merge pattern across both PRs with partial fix signal in PR #4—verify PR #4 fully remediates

PR #3 produced three surfaced findings, all from pattern sweep output around dynamic execution and unsafe override handling. Its changed hunks were generally assessed as safe or safer, but the report still raised uncertainty about nested override handling and whether related dynamic execution paths remained reachable elsewhere in the file.

PR #4 produced one surfaced finding in the final report, plus the more important signal: an explicit partial-fix suspicion. Cassian recognized that validation had been added before billing expression evaluation, but the underlying new Function() sink still remained in place, so the remediation was treated as directionally positive but potentially incomplete.

The most valuable output wasn’t just the findings themselves. It was the distinction Cassian made between “this hunk is directionally safer” and “this remediation may still be incomplete.” A traditional SAST tool would either flag the raw new Function() call with no PR context or miss the nuance entirely. The semi-formal reasoning let the agent separate local hunk safety from broader remediation sufficiency.

Takeaways

Differential Review as a Cost Control

Analyzing a full codebase with 14 agents is expensive. Differential review is the cost control. By focusing on what changed in each PR and only sweeping the full codebase for discovered patterns (not all possible patterns), Cassian keeps the token budget manageable. The routing logic adds another layer—DEEP analysis for small PRs, SURGICAL for large ones, deep phases only for HIGH-risk files. You’re paying for agent reasoning where it matters most.

Semi-Formal Reasoning Changes the Output Quality

The difference between “this code has a vulnerability” and “here is a traceable chain of premises, execution traces, and edge cases that concludes VULNERABLE with this specific counterexample” is the difference between a SAST alert and a security review. The certificates make agent output auditable. A human reviewer can check whether P1 actually supports the conclusion without re-reading the source.

The Scale Problem

This is the broader takeaway. As code generation increases PR volume, manual security review will not keep pace. Traditional SAST tooling will not fully keep pace either, because the problem is no longer just spotting known sink patterns in isolation. It is understanding whether a generated fix actually closes the issue, whether it only moves the risk, and whether the same pattern is now spreading across the codebase.

Cassian reviewed 2 PRs against a modest codebase and produced a run-level synthesis that surfaced a hotspot file, a repeated pattern, and an explicit partial-fix signal. That is where LLM-based review becomes useful: not as a magic replacement for security engineering, but as a way to add semantic, evidence-backed analysis at a scale that manual processes and conventional static rules struggle to match.

There is a broader push across the space toward exactly this loop: find issues at scale, then verify fixes at scale. Anthropic’s recent 0-days post also highlights a complementary commit-review lens, where past fixes and security-relevant commits become a way to uncover adjacent bugs that one-shot scans and static rules can miss.

The next step is integrating this into the SDLC. A GitHub Action that triggers Cassian on every security-labeled PR is the obvious path. Or expose it as an API so it slots into existing agentic remediation workflows—a remediation agent fixes the vulnerability, opens a PR, and Cassian reviews it automatically before a human ever looks at it.

A word of caution though: this comes with real caveats. Don’t blindly auto-accept a PR because an agent says the fix looks good. You’d want to run this against a corpus of previous findings first, establish baseline metrics for accuracy and false-negative rates, and set thresholds that must be met before even considering automated approval. As a first pass that gives the reviewing engineer a structured starting point, though, this is exactly the kind of leverage point that starts to make sense.

The agents aren’t perfect. They miss things. They occasionally produce confident-but-wrong conclusions. And do you even need this many agents? I’ll leave that as an exercise for the reader.

But this is the level at which you need to be thinking about your processes across the pentest engagement lifecycle. The semi-formal structure makes the errors detectable—you can trace the reasoning and find where it went wrong, rather than staring at an opaque “HIGH severity” label and wondering if it’s real.

Update (3-13-2026)

Following a question about cost and runtime, I repeated the review six times while varying tool placement and pipeline structure across runs. The target was the same legalCTF repository — roughly 18.5K lines across 73 source files. The runs averaged 5.3 minutes, roughly 107 tool calls, and roughly $2.02 each. Five of six completed successfully; one had a PR-level analysis failure.

More notable than the averages was how much the output varied across runs. Total findings ranged from 2 to 6. PR #3 carried the only HIGH-severity finding in four of five runs (80%). PR #4 had zero confirmed findings in three of five runs (60%), but was still rejected each time on uncertainty and partial-fix signals. All runs identified the same core issue class and the same hotspot file.

The variation tracked to workflow design, not model capability. The pattern-sweeper could expand a single weak pattern into multiple reportable findings in the same file — the hotspot was correct, but recurrence matches were counted alongside the main finding instead of being collapsed. Restructuring the sweep phase to collapse recurrence matches into a single representative finding per file led to more consistent results.

References

- Agentic Code Reasoning — Ugare and Chandra (Meta, 2026). The semi-formal reasoning methodology used in Cassian’s prompts

- ODYSSEUS: Building an Agentic Pentest Platform — The previous post in this series

- Vercel AI SDK — The multi-model SDK used by the harness

- Trail of Bits Differential Review Skill — Inspiration for the differential review methodology

- OWASP Juice Shop — The target used in the ODYSSEUS post